Blog / Amazon Data Scraping: A Must-Have Strategy and Ultimate Solutions

28 Nov 2023

As the modern world moves into the technology era, it has become easier for individuals to discover and buy the products they want to purchase through online platforms. Some of the top options on digital platforms for individuals in their commercial operations are websites such as Flipkart, Alibaba, eBay, and Walmart. To optimize product visibility and offers, e-commerce sellers must employ data analytics to attract potential customers.

Nowadays, many customers use the Amazon platform instead of Google to find the routine products they're looking for. With about 37.6% of the US e-commerce industry, Amazon is a great tool for businesses looking to understand their target market better and make more informed business decisions. Hence, Amazon data scraping helps businesses obtain the necessary data suitable for their company, which benefits all parties involved.

Amazon is the source for all critical and valuable information on items, sellers, reviews, ratings, special deals, news, etc. It is advantageous for suppliers, buyers, and sellers alike to get data from the platform. Gathering information from Amazon may assist in reducing the expensive process of getting e-commerce data rather than combing hundreds of websites.

Amazon Product Data Scraping is the automated process of obtaining information and data about items accessible on the Amazon marketplace. This method entails gathering different information on items listed on Amazon, including product names, descriptions, pricing, photos, customer reviews, ratings, seller information, and more.

Amazon product data scraping aims to collect thorough and organized data from Amazon's website effectively. Businesses, academics, and people can use this data for various purposes.

The process of acquiring e-commerce information may be made less expensive by gathering data from Amazon rather than having to comb through hundreds of websites.

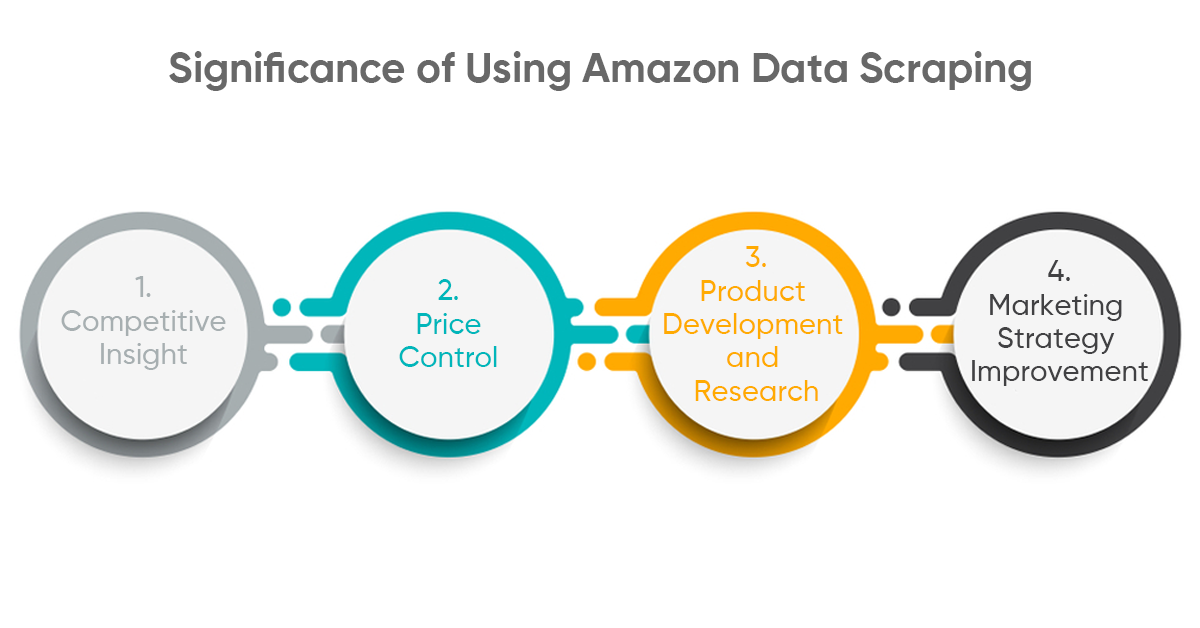

Here are a few benefits of data scraping from Amazon:

Amazon Data Scraping gives organizations real-time information about their competitors' strategies. Companies can change their approach and stay ahead of the competition by analyzing product listings, price structures, and consumer reviews.

Amazon's price swings are relatively typical. Regularly scraping pricing data allows firms to watch changes, optimize their pricing strategy, and remain competitive in a volatile market.

Businesses can uncover market gaps, analyze consumer preferences, and fine-tune their product development strategies to efficiently satisfy customer requests by evaluating customer feedback.

Data scraping allows organizations to customize their marketing tactics by understanding customer sentiment and preferences for optimum impact. Creating targeted marketing and optimizing product listings are examples of this.

In this expanding era of the digital world, there has been a dependency on online platforms for routine purposes. Businesses utilize online platforms like Amazon, which has massive data of various industrial segments, which helps stay competitive in this modern scenario. On the other hand, a few challenges come with data scraping through Amazon. Let’s discuss briefly the below-mentioned points:

Amazon's terms of service effectively prohibit the scraping. If the scraping is performed without proper authorization, it can lead to legal action from Amazon. So, adhering to Amazon's policies and guidelines to perform scraping is crucial. With authority, extracting and reproducing pricing strategies, product descriptions, or customer reviews can ensure the marketplace's integrity and protect competitors and consumers.

Amazon uses a very sophisticated anti-scraping mechanism to protect its data. To overcome this hurdle, one may be required to adapt and use advanced techniques to avoid detection constantly. The dynamic approach of the web page makes it difficult for traditional scraping tools to collect the data consistently. Also, Amazon uses a rate limitation technique to restrict access to a specific IP address to more than a preset limit in a specific timeframe. If anyone tries to violate that, it results in immediate IP blocking. To overcome

Amazon harbors a wealth of valuable data. It is essential to effectively navigate the challenges associated with the sheer volume and quality of information, which is crucial for businesses that employ data scraping strategies. Furthermore, Amazon keeps a massive amount of data. If you want to collect content for your company's purposes, you must understand that scraping large volumes of material can be challenging if you are doing it yourself.

Creating a web scraper without specific knowledge about the field will run for hours, and collecting hundreds of thousands of strings is challenging. Because Amazon is not like other websites, the site's algorithms are complex to scrape. The webpage is designed to reduce the practice of crawling.

One product may have multiple varieties, allowing clients to explore and select what they require quickly. Product variants are equivalent to the abovementioned patterns and are presented differently on the site. And, rather than being graded on a single product version, ratings and reviews are frequently aggregated and accounted for by all available variants.

Even though Amazon data scraping may seem alluring, it's crucial to understand the law to prevent consequences. It's essential to exercise caution because their strict Terms of Service prohibit automated access to Amazon's website.

The first step in ethical scraping is to abide by Amazon's robots.txt file guidelines. Following these rules guarantees that scraping operations take place inside legal limits.

In addition to being unethical, aggressive scraping techniques can cause server overload. Reducing the likelihood of discovery and fines can be achieved by implementing delays between queries and using a scraper that emphasizes courteous crawling.

The secret to evading discovery is to mimic human behavior. Scraping tools that rotate User-Agent strings mirror real-world browsing patterns, which makes it more difficult for Amazon to identify artificial scraping activity.

Privacy is essential when stealing information from Amazon. In addition to providing a certain level of privacy, using proxies to conceal IP addresses helps avoid IP bans, protecting against possible disruptions to scraping activity.

Suppose you frequently need to scrape data from Amazon. In that case, you may encounter several annoyances that prevent you from accessing the data, such as pagination, login barriers, IP restrictions, CAPTCHAs, and data in different formats. We've compiled a list of more potent tools that will help you address these issues:

Amazon provides API access to some data, allowing organizations to retrieve information in a structured and approved manner. Using API endpoints assures that you comply with Amazon's terms of service.

Implementing proxy rotation and changing user agents might help evade the discovery of anti-scraping methods. It guarantees that the scraping process is more seamless and unnoticed.

Due to the sheer volume of data on Amazon, effective cleaning and processing procedures are required. Using data-cleaning tools and algorithms aids in extracting helpful information while removing noise.

To reduce legal risks, firms should obtain permission or consent from Amazon before engaging in scraping activities.

Common Amazon data scraping mistakes to avoid

Failure to implement a robust IP rotation mechanism increases the likelihood of being noticed and blocked. It is critical for successful scraping to update and monitor IP addresses regularly.

CAPTCHAs are used to distinguish between humans and bots. Please address these issues to avoid scraping. A realistic solution is to use CAPTCHA-solving services.

Using languages like Python or Node.js to create bespoke scraping scripts offers unmatched flexibility for experienced users with specialized data requirements. It is possible to fine-tune custom scripts to extract the exact data required.

Gathering information from Amazon can assist in alleviating the expensive process of acquiring e-commerce data instead of scraping through hundreds of different websites. So, let’s understand the strategies to scrape the data hassle-free:

To remain ahead of anti-scraping procedures, scraping programs must be continually updated to ensure they can respond to changes on the Amazon platform.

Keeping an IP address rotation system in good working order is critical for preventing detection and blocking. Monitoring IP health guarantees that the scraping process runs smoothly.

Using different user agents simulates human behavior, lowering the danger of being detected as a scraper. It provides an additional layer of defense against anti-scraping procedures.

Scrapers should mimic human behavior to avoid detection. Consistent mouse movements, time on the page, and click patterns help to make the scraping process more authentic.

The technological features of web scraping become increasingly significant after the legal problems are addressed. Taking preventative measures can significantly boost the efficiency and success of your scraping operations. Let’s understand the techniques briefly to boost data efficiency:

The key to success is choosing the appropriate libraries and scraping tools. Browser automation tools like Selenium and Python modules like Beautiful Soup and Scrapy provide strong alternatives. Selecting the right tools for your scraping project depends on its complexity.

Use proxies and IP rotation to improve anonymity and prevent IP bans. By making sure that your scraping activity appears as organic traffic, this tactic lowers the possibility of being discovered and leads to further blockages.

JavaScript is used to load dynamic content on many current websites. Use programs that can render JavaScript, such as Puppeteer or Splash, to scrape such content. By taking this proactive strategy, you can be confident that all the data, including the dynamically created pieces, is captured.

Make advance plans for efficient data management and storage. Before scraping, choose the data structure, storage format, and backup plans. By taking this proactive measure, data loss is avoided, and further analysis is streamlined.

Amazon data scraping is a potent tool for companies seeking a competitive advantage in e-commerce. However, it presents its obstacles, including legal and ethical concerns. Businesses can effectively manage these problems by utilizing ultimate solutions such as API utilization, proxy rotation, and data cleansing procedures, allowing the gathering of essential insights without compromising integrity.

Feel free to reach us if you need any assistance.

We’re always ready to help as well as answer all your queries. We are looking forward to hearing from you!

Call Us On

Email Us

Address

10685-B Hazelhurst Dr. # 25582 Houston,TX 77043 USA